Published Apr 02, 2026 • 5 min

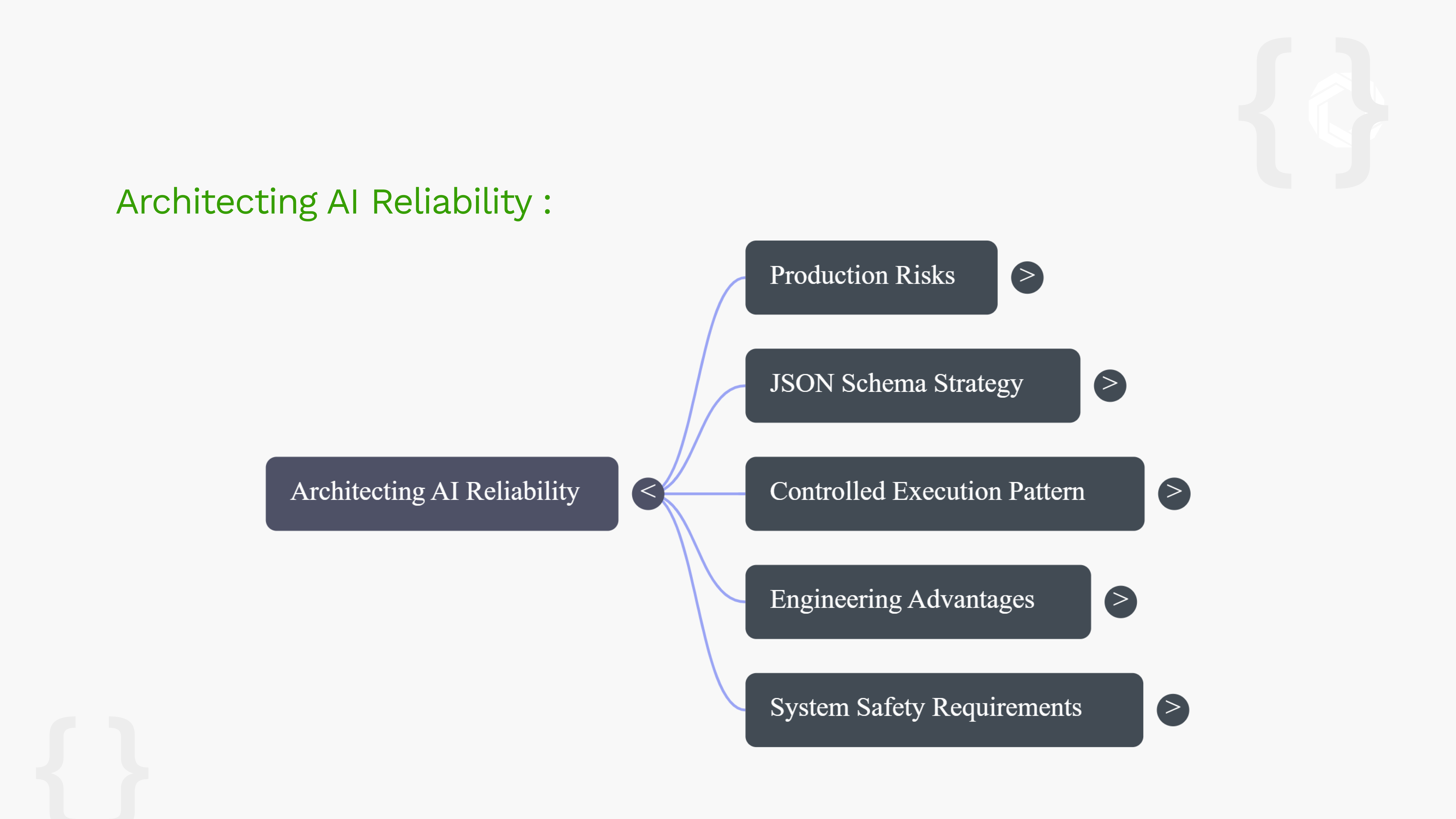

In operational systems, the real difficulty is rarely generating more code. The challenge is constraining generation so outputs are structurally valid, operationally safe, and executable within well-defined system boundaries.

Most hallucinations in AI code generation do not originate from model limitations alone. They also originate from underspecified outputs. When outputs are loosely defined, the model fills gaps probabilistically, inventing unsupported parameters, unsafe sequences, or ambiguous execution paths that the system was never designed to handle.

A schema does more than describe structure. It defines what outputs are allowed.

Instead of asking a model to produce open-ended code, the system requires it to produce JSON that conforms to a predefined contract. That contract specifies fields, types, enums, required values, nesting rules, and execution constraints. The result is a significantly narrower and more governable generation surface, and a validation gate the system can enforce before anything is executed.
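As a rough illustration of such a contract, the sketch below expresses a JSON Schema as a Python dictionary and checks model output against it. The `restart_service` action, its field names, and the `violations` helper are all hypothetical, and the checker covers only the handful of keywords used here. A production system would more likely rely on a full JSON Schema validator library than on hand-written checks.

```python
import json

# Hypothetical contract for one action; the field names are illustrative only.
RESTART_SCHEMA = {
    "type": "object",
    "required": ["action", "service", "environment"],
    "additionalProperties": False,
    "properties": {
        "action": {"type": "string", "enum": ["restart_service"]},
        "service": {"type": "string"},
        "environment": {"type": "string", "enum": ["staging", "production"]},
        "grace_period_seconds": {"type": "integer"},
    },
}

_TYPES = {"string": str, "integer": int, "object": dict}


def violations(instance, schema):
    """Return a list of contract violations; an empty list means the output conforms.

    Supports only type, enum, required, properties, and additionalProperties,
    which is a small subset of the JSON Schema specification.
    """
    expected = _TYPES[schema["type"]]
    # bool is a subclass of int in Python, so it is excluded explicitly.
    if not isinstance(instance, expected) or isinstance(instance, bool):
        return [f"expected {schema['type']}, got {type(instance).__name__}"]

    errors = []
    if "enum" in schema and instance not in schema["enum"]:
        errors.append(f"{instance!r} is not one of {schema['enum']}")

    if schema["type"] == "object":
        props = schema.get("properties", {})
        for name in schema.get("required", []):
            if name not in instance:
                errors.append(f"missing required field '{name}'")
        for name, value in instance.items():
            if name in props:
                errors.extend(f"{name}: {e}" for e in violations(value, props[name]))
            elif schema.get("additionalProperties") is False:
                errors.append(f"unexpected field '{name}'")
    return errors


good = json.loads(
    '{"action": "restart_service", "service": "billing", "environment": "staging"}'
)
bad = json.loads(
    '{"action": "restart_service", "service": "billing",'
    ' "environment": "prod", "force": true}'
)

print(violations(good, RESTART_SCHEMA))  # conforming output: no violations
print(violations(bad, RESTART_SCHEMA))   # invented enum value and invented parameter
```

The second payload shows the failure mode described earlier: the model invents an environment name and an unsupported `force` parameter, and both are caught before anything runs.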

Without output constraints, most AI systems follow this pattern:

Model → generated code → execution

With a schema enforcement layer, the architecture becomes:

Model → structured intent → schema validation → policy checks → controlled execution

The schema itself does not guarantee safety. But it provides a critical enforcement point where systems can verify structure and intent before actions are allowed to proceed. For higher-risk workflows, this shift significantly reduces ambiguity and makes AI behaviour easier to audit, constrain, and reason about.
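The staged flow above can be sketched in a few functions. Everything named here is an assumption made for illustration: the `scale_service` action, the `ScaleIntent` type, the replica limit, and the `Rejected` exception. The structural gate is a hand-written stand-in for real schema validation, and the executor only returns a message rather than calling any real infrastructure.

```python
import json
from dataclasses import dataclass

ALLOWED_ACTIONS = {"scale_service"}  # hypothetical action name
ALLOWED_ENVIRONMENTS = {"staging", "production"}


class Rejected(Exception):
    """Raised when model output fails a gate; nothing is executed."""


@dataclass(frozen=True)
class ScaleIntent:
    action: str
    service: str
    environment: str
    replicas: int


def validate_structure(raw: str) -> ScaleIntent:
    """Gate 1: parse the output and check fields, types, and enums."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise Rejected(f"not valid JSON: {exc.msg}") from exc
    expected = {"action": str, "service": str, "environment": str, "replicas": int}
    if not isinstance(data, dict) or set(data) != set(expected):
        raise Rejected("fields do not match the contract")
    for name, typ in expected.items():
        # bool is a subclass of int in Python, so it is excluded explicitly.
        if not isinstance(data[name], typ) or isinstance(data[name], bool):
            raise Rejected(f"field '{name}' has the wrong type")
    if data["action"] not in ALLOWED_ACTIONS:
        raise Rejected(f"unknown action {data['action']!r}")
    if data["environment"] not in ALLOWED_ENVIRONMENTS:
        raise Rejected(f"unknown environment {data['environment']!r}")
    return ScaleIntent(**data)


def check_policy(intent: ScaleIntent) -> None:
    """Gate 2: reject requests that are well-formed but operationally unsafe."""
    if intent.replicas < 1:
        raise Rejected("replicas must be at least 1")
    if intent.environment == "production" and intent.replicas > 10:
        raise Rejected("scaling production above 10 replicas needs human approval")


def execute(intent: ScaleIntent) -> str:
    """Controlled execution: a fixed handler, never model-written code."""
    # A real handler would call an orchestration API here; this one only reports.
    return f"scaled {intent.service} in {intent.environment} to {intent.replicas} replicas"


def handle_model_output(raw: str) -> str:
    intent = validate_structure(raw)  # structured intent + schema validation
    check_policy(intent)              # policy checks
    return execute(intent)            # controlled execution


ok = '{"action": "scale_service", "service": "api", "environment": "staging", "replicas": 3}'
print(handle_model_output(ok))

too_big = '{"action": "scale_service", "service": "api", "environment": "production", "replicas": 500}'
try:
    handle_model_output(too_big)
except Rejected as reason:
    print(f"rejected: {reason}")
```

The second request passes the structural gate and fails only at the policy gate, which is the distinction the paragraph above draws: valid structure is necessary for safe execution but does not establish it.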

None of this is new. It is established engineering practice: explicit contracts, validated inputs, typed interfaces, and minimal trust at system boundaries.

JSON Schema introduces a machine-verifiable layer of intent between the model and the runtime. Systems can automatically validate structure, enforce interface expectations, and reject non-conforming outputs before execution begins. This is the same discipline applied to any production API or data pipeline.

The more consequential the action, the less acceptable free-form generation becomes. Safe AI systems will not be built on model confidence alone. They will be built on carefully designed constraints and enforceable system guarantees.