Published Apr 29, 2026

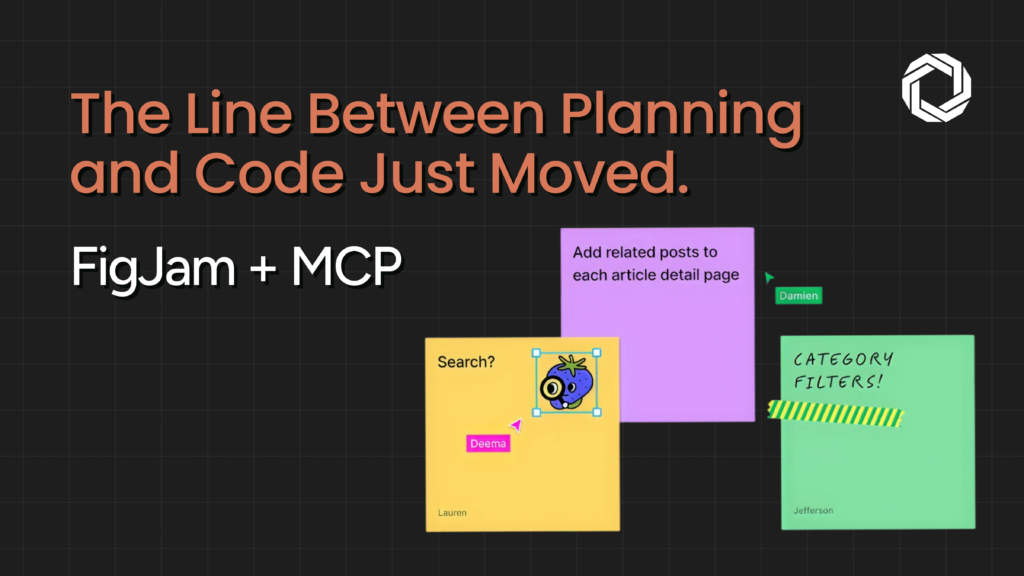

Most agentic coding setups optimize for execution speed, getting from prompt to pull request as fast as possible. The planning step is usually a text prompt, a ticket description, or at best a comment thread. None of those are shared, visual, or structured enough to be reviewed before the agent runs. The result: agents implement the wrong thing quickly, and engineers spend their review time untangling misaligned architecture rather than validating correct code. This isn’t a model quality problem. It’s a workflow problem, and it needs a different kind of fix.

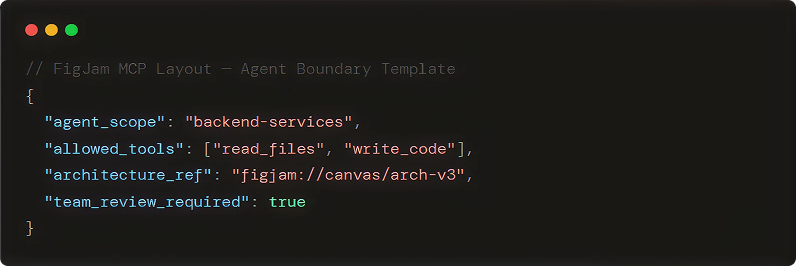

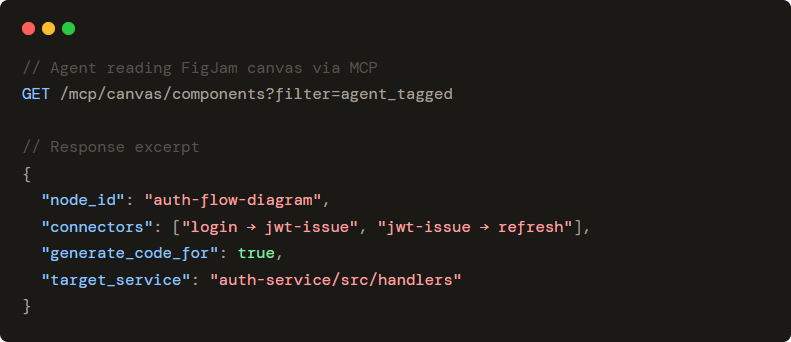

Figma’s MCP (Model Context Protocol) skill layer gives coding agents the ability to read from and write to FigJam directly: creating boards, placing diagrams, and updating planning artifacts as part of their standard workflow. This isn’t a Figma plugin or a UI shortcut. It’s a protocol-level integration: agents call FigJam tools the same way they call code execution or search tools. The canvas becomes part of the agent’s context, not a separate handoff. That distinction matters. Shared context that lives in a visual workspace is far easier for human reviewers to engage with than shared context buried in a prompt history.
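To make “the same way” concrete: in the Model Context Protocol, a tool invocation is a JSON-RPC 2.0 request with the method `tools/call`, carrying a tool `name` and an `arguments` object. The minimal Python sketch below builds two such requests, one for a search tool and one for a diagram tool. Both tool names and their arguments are hypothetical placeholders, not Figma’s published tool list; the point is only that the envelope is identical.

```python
import itertools
import json

# JSON-RPC 2.0 requests that expect a response need a unique id; a counter is enough here.
_request_ids = itertools.count(1)


def tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request envelope (JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Both tool names below are HYPOTHETICAL placeholders, not Figma's actual tool list.
search = tool_call("web_search", {"query": "idempotent checkout retries"})
diagram = tool_call("create_figjam_diagram", {"title": "Checkout service plan"})

# Same envelope, same method; only the tool name and arguments differ.
print(json.dumps(diagram, indent=2))
```

In this sketch, the agent’s client sends both requests through the same path, which is why a canvas tool can sit alongside search and code execution without a separate handoff mechanism.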

In step one, the agent doesn’t write code; it builds a plan. It uses FigJam to map system components, data flows, dependencies, and decision points into a structured architecture diagram. Engineering teams can see exactly what the agent is proposing before any implementation begins. This shifts the review conversation from “why did the agent build it this way?” to “does this plan match our intent?”, which is a dramatically cheaper conversation to have. Catching a misaligned dependency in a diagram takes minutes. Catching it in code can take a sprint.
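One way to picture the structured plan from step one is as a small graph: components and decision points as nodes, dependencies and data flows as labeled edges. The sketch below assumes that model (the `ArchitecturePlan` class and its methods are hypothetical) and renders it as Mermaid flowchart text, chosen only because it is a compact, human-readable diagram format. Whether a particular FigJam tool accepts Mermaid input is an assumption, not something this post establishes.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ArchitecturePlan:
    """HYPOTHETICAL plan model: components as nodes, dependencies/data flows as edges."""
    components: dict[str, str] = field(default_factory=dict)         # id -> display label
    decisions: set[str] = field(default_factory=set)                 # ids drawn as decision points
    edges: list[tuple[str, str, str]] = field(default_factory=list)  # (source, target, label)

    def add_component(self, node_id: str, label: str, decision: bool = False) -> None:
        self.components[node_id] = label
        if decision:
            self.decisions.add(node_id)

    def connect(self, source: str, target: str, label: str = "") -> None:
        # Refuse edges to undeclared components so the diagram never points at nothing.
        for node in (source, target):
            if node not in self.components:
                raise KeyError(f"unknown component: {node}")
        self.edges.append((source, target, label))

    def to_mermaid(self) -> str:
        lines = ["flowchart LR"]
        for node_id, label in self.components.items():
            if node_id in self.decisions:
                lines.append(f"    {node_id}{{{label}}}")  # Mermaid rhombus: decision point
            else:
                lines.append(f"    {node_id}[{label}]")    # Mermaid rectangle: component
        for source, target, label in self.edges:
            arrow = f"-->|{label}|" if label else "-->"
            lines.append(f"    {source} {arrow} {target}")
        return "\n".join(lines)


plan = ArchitecturePlan()
plan.add_component("api", "Checkout API")
plan.add_component("auth", "Auth check", decision=True)
plan.add_component("db", "Orders DB")
plan.connect("api", "auth", "validate token")
plan.connect("auth", "db", "write order")
print(plan.to_mermaid())
```

Rejecting edges to undeclared components is a deliberate choice: a reviewer reading the diagram should never see an arrow pointing at something the plan does not define.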

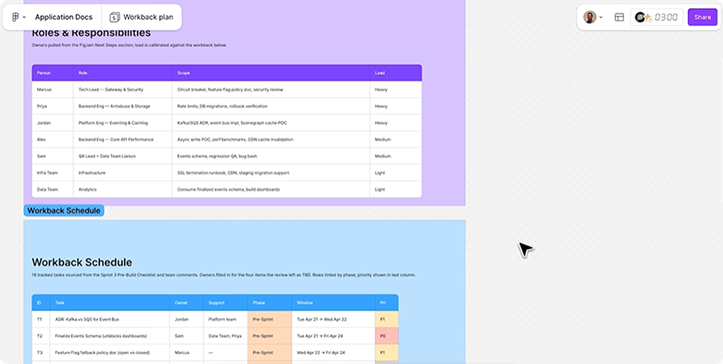

Step two is where the human–agent loop becomes real. FigJam is already where design and product teams work, so adding agents to the same canvas means engineering review happens in a space the whole team already uses. Reviewers annotate diagrams, flag concerns, and approve the architecture before the agent moves forward. The agent receives that structured feedback and revises the plan without losing context. By the time implementation starts, there’s alignment, not assumption. That’s the gap most agentic pipelines don’t close until something breaks in production.
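The approval gate in step two can be modeled as a check the agent runs before writing any code. This sketch assumes a hypothetical annotation shape (reviewer, target element, verdict, plan revision) rather than any real FigJam comment format. It treats an approval as valid only for the plan revision it was given on, so every revision the agent makes requires fresh sign-off.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    COMMENT = "comment"   # informational; never blocks implementation
    CONCERN = "concern"   # blocks until marked resolved
    APPROVE = "approve"   # sign-off, valid only for the revision it was given on


@dataclass
class Annotation:
    reviewer: str
    target: str           # id of the diagram element the note is attached to
    verdict: Verdict
    note: str = ""
    revision: int = 1     # plan revision the reviewer was looking at
    resolved: bool = False


def review_status(annotations, required_reviewers, current_revision):
    """Return (ready, open_concerns, missing_approvals) for the current plan revision."""
    open_concerns = [a for a in annotations
                     if a.verdict is Verdict.CONCERN and not a.resolved]
    approvers = {a.reviewer for a in annotations
                 if a.verdict is Verdict.APPROVE and a.revision == current_revision}
    missing = sorted(set(required_reviewers) - approvers)
    return (not open_concerns and not missing), open_concerns, missing


# HYPOTHETICAL review session on a plan with two required reviewers.
notes = [
    Annotation("maya", "db", Verdict.CONCERN, "Don't write orders before the auth check", revision=1),
    Annotation("lee", "api", Verdict.APPROVE, revision=1),
]
first = review_status(notes, ["maya", "lee"], current_revision=1)   # blocked by Maya's concern

# The agent revises the plan (revision 2); Maya's concern is resolved and she approves.
notes[0].resolved = True
notes.append(Annotation("maya", "db", Verdict.APPROVE, revision=2))
second = review_status(notes, ["maya", "lee"], current_revision=2)  # Lee must re-approve

notes.append(Annotation("lee", "api", Verdict.APPROVE, revision=2))
third = review_status(notes, ["maya", "lee"], current_revision=2)   # ready to implement
```

In the second check, Lee’s earlier approval no longer counts because it was given on revision 1. Tying sign-off to a revision is what keeps a revised plan from slipping into implementation on stale approvals.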

In step three, the agent moves from canvas to codebase, but the plan it built in FigJam remains a live reference. Agents can access their own planning artifacts throughout implementation, which matters most in long-context or multi-agent workflows where architectural drift is a real failure mode. The canvas acts as persistent shared state that human reviewers and agents can reference simultaneously. For teams already running parallel agent tracks, this is the kind of shared context layer that keeps independently running agents from drifting apart.
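A persistent plan only guards against drift if something compares it with what was actually built. The sketch below assumes each agent can report the dependency edges its branch introduced (how it extracts them, for example from an import graph, is left open) and diffs the union against the approved plan’s edges. `find_drift` and the edge data are illustrative, not part of any FigJam or MCP API.

```python
def find_drift(planned_edges, observed_edges):
    """Diff dependency edges observed in code against the approved plan.

    Both arguments are iterables of (source, target) pairs. Returns edges that
    were built but never planned, and planned edges with no implementation yet.
    """
    planned = set(planned_edges)
    observed = set(observed_edges)
    return {
        "unplanned": sorted(observed - planned),
        "not_yet_built": sorted(planned - observed),
    }


# Approved plan read back from the canvas (illustrative data).
approved_plan = [("api", "auth"), ("auth", "db")]

# Edges each parallel agent reports from its own branch (HYPOTHETICAL).
agent_a = [("api", "auth")]
agent_b = [("auth", "db"), ("api", "db")]   # agent B lets the API write to the DB directly

report = find_drift(approved_plan, agent_a + agent_b)
print(report)
```

Checking agent A’s edges alone would report `("auth", "db")` as not yet built and miss agent B’s bypass entirely. The drift only becomes visible when every track is compared against the same shared plan.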

The FigJam MCP integration is more than a Figma update: it is a reference model for how coding-agent planning with FigJam should work across any engineering team’s agentic stack. At Techlusion, we’ve seen the planning gap cost teams more time than any model quality issue. The teams that close it earliest, with structured, reviewable, visual planning before execution, are the ones where human–agent collaboration actually compounds. Figma’s MCP approach is one of the first production-grade implementations of that principle, and the architecture is extensible: the same skill layer that powers FigJam planning can be applied to prototyping feedback, design-to-code generation, and developer handoff. Teams evaluating agentic infrastructure should study it on those terms, as a pattern to build on rather than a feature release to skim past.