Published Apr 09, 2026 • 5 min

Weavy, founded in Tel Aviv in 2024, built a browser-based creative platform that brought leading AI models together with professional editing tools on a single node-based canvas. In under a year it had attracted a community spanning independent creatives, fast-growing startups, and Fortune 100 enterprise teams: cinematographers using it for VFX, architects for staging imagery, marketers for social content, and designers for product and brand assets.

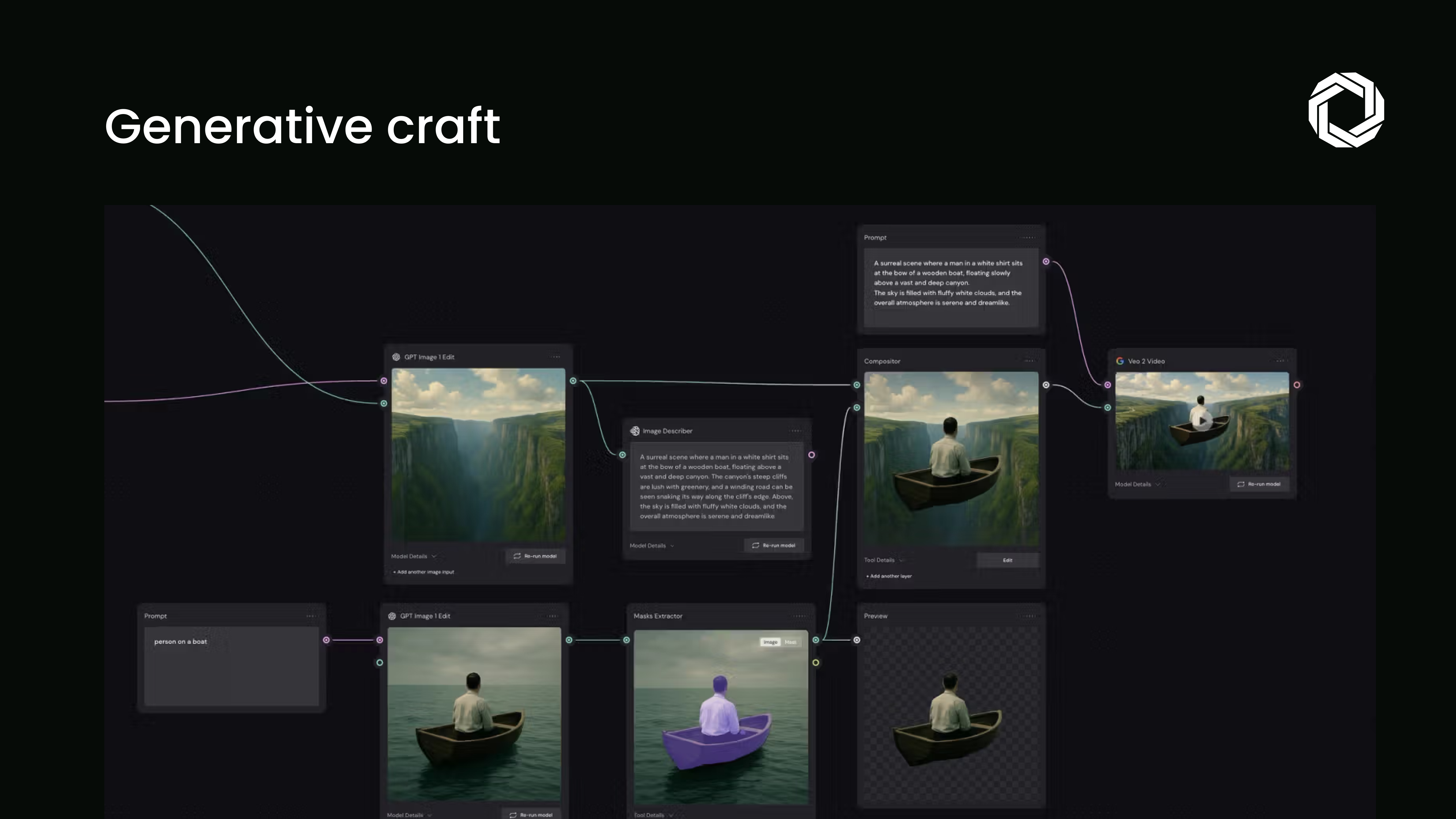

The platform’s core strength was its approach to generation: not prompt-and-done, but a composable pipeline where each output feeds the next step. Adjusting lighting, masking an object, or colour-grading a shot all happen within the same workflow that generated the asset in the first place.

As Figma Weave, that capability now sits inside the world’s most widely used product design platform.

Figma Weave brings AI-native image, video, animation, motion design, and VFX generation and editing into the Figma platform. Teams can select the right model for each task (Seedance, Sora, or Veo for cinematic video; Flux or Ideogram for realism; Nano-Banana or Seedream for precision) and compose the outputs in the node-based workspace.

The node-based approach is the architectural decision worth paying attention to. Outputs are not terminal. They are branching points: each generation feeds into editing, and each edit feeds into the next generation. The result is a media pipeline that keeps human craft in the loop at every stage rather than replacing it with a single model output.
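To make the branching idea concrete, here is a minimal sketch of a node graph in Python. It is not Figma Weave's API or internal design; the `Node` class, the `generate`, `edit`, and `composite` helpers, and the string placeholders standing in for images are all hypothetical, chosen only to show how one generation can feed several edits that are later recombined.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Node:
    """One step in the pipeline: a generation or an edit (hypothetical)."""
    name: str
    op: Callable[..., str]  # stand-in for a model call or an editing tool
    inputs: List["Node"] = field(default_factory=list)

    def run(self, cache: Dict[str, str]) -> str:
        # Evaluate upstream nodes first, reusing results already computed,
        # so a shared parent is only executed once.
        if self.name not in cache:
            upstream = [node.run(cache) for node in self.inputs]
            cache[self.name] = self.op(*upstream)
        return cache[self.name]


# Placeholder operations: strings stand in for images or video clips.
def generate(prompt: str) -> Callable[[], str]:
    return lambda: f"image({prompt})"


def edit(label: str) -> Callable[[str], str]:
    return lambda asset: f"{label}({asset})"


def composite(a: str, b: str) -> str:
    return f"composite({a}, {b})"


# One generation branches into two edits, which are then recombined.
base = Node("base", generate("loft interior"))
relit = Node("relit", edit("relight"), [base])
masked = Node("masked", edit("mask_sofa"), [base])
final = Node("final", composite, [relit, masked])

cache: Dict[str, str] = {}
result = final.run(cache)
print(result)
# composite(relight(image(loft interior)), mask_sofa(image(loft interior)))
```

The point of the sketch is that `base` is not an end state: both `relit` and `masked` consume it, and swapping either edit for a different one leaves the rest of the graph untouched.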

From a product architecture standpoint, this acquisition is significant for one clear reason: it expands the definition of what a design tool is responsible for.

Figma has spent over a decade becoming the standard environment for UI, UX, and product design collaboration. Weave extends that surface into full creative media production, the kind of output that previously required separate tools, separate teams, and separate workflows. Bringing it into a single platform, with a composable pipeline rather than a disconnected set of generation buttons, is a fundamentally different proposition.

For engineering and product leaders, the practical implication is this: the creative production layer of your product workflow is about to collapse into the same environment your design team already works in. That changes how you staff, how you hand off assets, and how quickly concepts move from idea to production-quality output.

The Weavy community before the acquisition was not just early adopters experimenting with prompts. Architects were generating staging imagery for client presentations. VFX artists were building media and effects pipelines for television and film. Marketing teams were producing video and banner assets for social at scale. Design teams were generating assets for product mocks, brand systems, and prototyping.

These are production workloads, not experiments. That Weavy reached this level of real-world usage in under a year, before it had Figma's distribution behind it, suggests what the combined platform may be capable of at scale.

The line between design tool and media studio is blurring. That is the directional signal this acquisition sends, and it is consistent with where AI is pushing creative tooling more broadly.

The teams that will benefit most from Figma Weave are not the ones who treat generation as a shortcut. They are the ones who treat it as a starting material, something to mold, refine, and combine with human judgment to reach outputs that a prompt alone could not produce. That distinction, between generation as destination and generation as medium, is exactly what Figma’s philosophy for this acquisition articulates. And it is the right framework for how serious product teams should be thinking about AI in their creative workflows.